My Harness: How I stopped babysitting AI and went kitesurfing

Speed without structure is just a faster way to get lost. Here's the harness I built to coordinate AI agents across a Rails codebase — from brainstorming to shipping — so I could stop babysitting and go kitesurfing.

When I first started coding with Claude, I was terrified. Features appeared in minutes. Claude reported that everything was coded, working and ready for my manual QA. Wow! But then I opened the browser, checked the code, and wanted to throw my laptop out of the window.

When you encounter a vague ticket, you slow down. You ask questions and clarify the task. Sometimes, you document your findings. AI doesn't have this problem. It just makes assumptions and carries on until the task is complete. It produces more and more code. If a requirement is difficult to meet, it produces stupid workarounds. And it creates a lot of technical debt.

Speed without structure is just a faster way to get lost.

My LinkedIn feed is basically all about how AI is making everyone 10 times more productive. But here's what they're not telling you: this increase in productivity is conditional. As Obie Fernandez put it at the Ruby Community Conference in Kraków, you only get the velocity if you know what good code looks like. Otherwise, you just end up with 10 times the mess. He said something that has stuck with me:

We're already in the singularity.

The old rules no longer make sense. The CEO of Shopify is running AI agents against his own infrastructure on a Wednesday afternoon and posting terminal screenshots. We can produce more code than ever before. Engineering is no longer a bottleneck. The problem now is deciding what to implement and why. That's why it's crucial to be a product engineer and bring value to the product.

The coordination problem

In the good old days, I used to work in an office. Five developers in the same room. We talked to each other and reviewed each other's code. We had a collective knowledge of the code and product we were working on. If I encountered something strange, I could always ask, "Hey, what's this?"

Now, imagine that my colleagues are AI agents. They might talk to each other, but they forget about it as soon as they've finished the task. Each one wakes up fresh, reads whatever they're instructed to read, then starts writing code. They have no idea who or what to trust. Each one picks a direction and starts running confidently. They don't know that this piece of code is legacy, and they'll confidently repeat it wherever they think it fits.

Without a process, you end up with five different opinions on every possible topic. Each of them confident that they are doing it right.

Obie calls this the coordination problem. Processes exist to reduce uncertainty and coordinate humans. Today, they also need to coordinate the work of AI agents. He's approaching this from his extensive experience in Extreme Programming — articulate intent in small increments, check each other's work, keep the feedback loop tight. The same instincts that made pairing powerful also apply to coding with AI.

The question is how you encode this discipline when your team includes AI agents.

Harness engineering

Three engineers. One million lines of code. 1,500 pull requests. Zero lines written by hand.

OpenAI calls what they did 'harness engineering'. They did not write code and they did not review PRs. Instead, they designed an environment and observed the outcome. When something went wrong and agents were drifting, the solution was never to "try harder" or "tweak the prompt". It was always: 'What capability is missing, and how can we make it legible and enforceable for the agent?'

Their team used to spend every Friday cleaning up 'AI slop' — 20% of their week just fixing agent drift. That didn't scale. So they encoded 'golden principles' into the repository and built recurring clean-up agents that scan for deviations and open refactoring pull requests at regular intervals. Garbage collection for code quality.

This matches my experience exactly. The discipline shows up in the scaffolding, not the code. Quality is determined by conventions, verification gates and review pipelines. The agents are the hands, and the harness watches over them like Big Brother, verifying their work. Humans stop writing code and start writing the rules that the code must follow. They design constraints and create harnesses: the system that builds the system.

And here's what matters for teams: the harness standardises everyone. One team encodes their rules as skills and ensures they're followed using hooks and automated code reviews. Another team doesn't. The former gets clean interfaces. The latter has logic scattered across controllers and views. The team that figures this out first will outperform everyone else by far.

What follows is my full workflow. It builds on skills and hooks (Post 1), CI and code review (Post 2), and Rails conventions (Post 3). If you want the deep dive on any piece, start there.

How I use Claude Code, aka my Rails harness

That's the theory. Now to the keyboard. I'm CTO of a Rails app where I'm the only developer. I built this harness because I was the bottleneck. I also run a software house where we use it daily across client projects. This is how we ship.

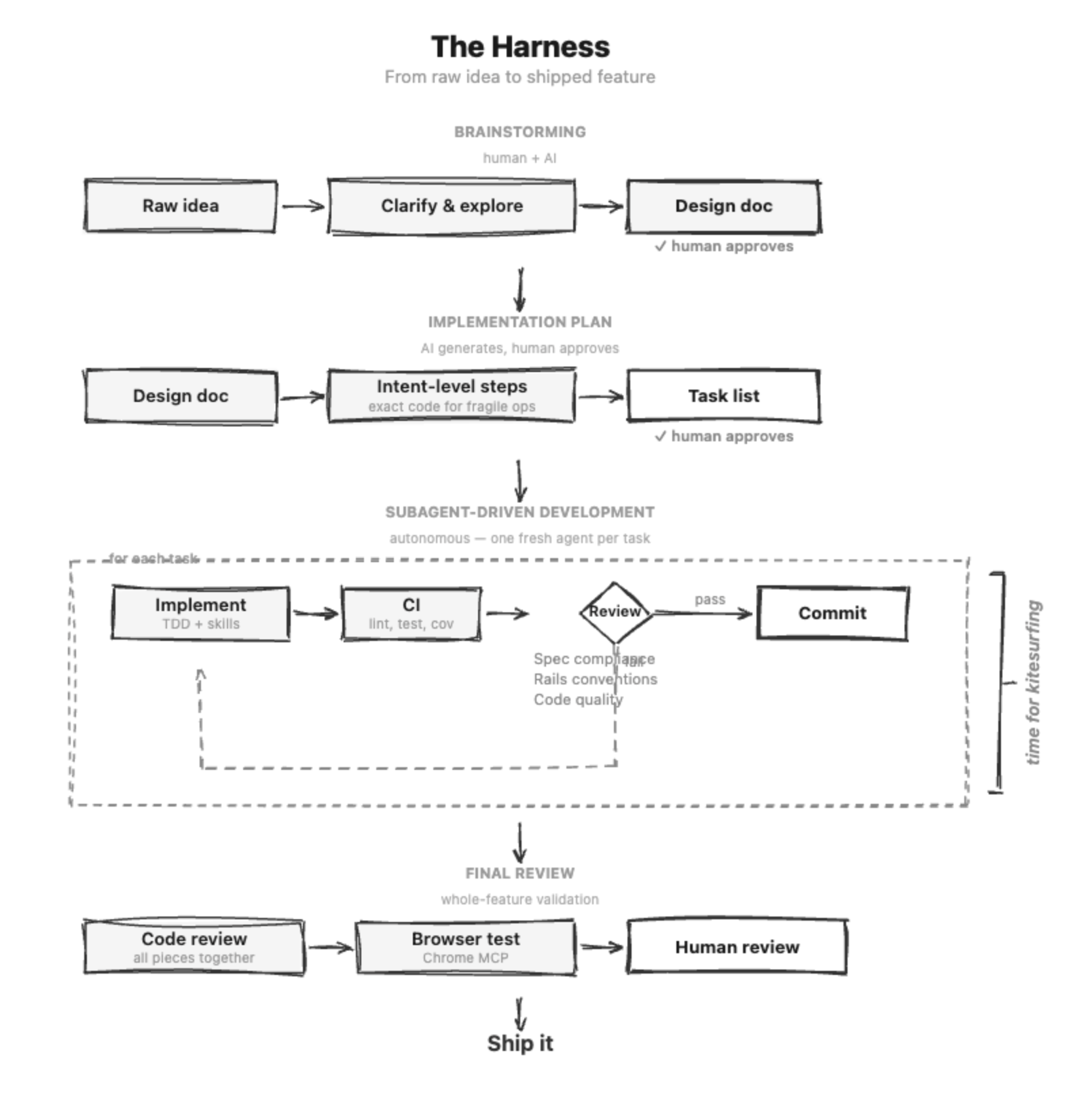

Brainstorming

I'm using the brainstorming process from the awesome superpowers plugin by Jesse Vincent.

It usually starts with a raw idea: "Hey, I want to build a Discord notification system."

By this stage, I usually have a clear idea of what I want, and I want to explore my options. Before, it would only be me and a piece of paper. Now, however, I use Claude as a sparring partner and advisor.

The chief of product from one of the companies I work with is also using this prompt. We've slightly modified it so that he doesn't make any technical decisions. He passes a design document to me, and I fill in the technical details.

Now, let's take a look at how it works. The interactive process kicks in and follows this flow:

- Explore the context – understand the codebase and the current state of the project. The more context you can provide Claude with, the better. Imagine explaining to your past self how the new feature fits into the system. "When implementing Discord integration, look at the user model. It's tricky because some OAuth logic is in the service objects and some is elsewhere. You may need to change both; make sure you take a look and decide."

- Clarifying questions – Claude asks one question at a time, mostly multiple choice.

- Propose 2-3 approaches – all of which should be listed alongside their respective trade-offs and a Claude recommendation. While I appreciate the recommendation, I usually advise using your own judgement. Often, the recommendation misses the mark when I already have an extension in mind that I haven't shared with Claude, and the recommendation goes for the simplest possible solution.

- Present the design – section by section, getting my approval incrementally. Another chance to correct the flow and clarify the design.

- Write the design doc – save it to a file for future reference. I keep design documents in the app's repository.

- Spec review loop — a new agent and an automated reviewer validate it and make iterative fixes until it is approved. This process checks for logic issues and compares the design with the application code, flagging potential issues. It provides another pair of automated eyes before we start coding.

- Human spec review – I approve before moving on.

Although the design varies depending on the type of task, the concept is essentially a validated specification of what to build. It bridges the gap between "What do we want?" and "How do we want to implement it?".

It captures:

- The problem – what we're solving and why.

- The chosen approach — which option we picked and why. We keep the alternatives in the document for future reference, so that agents do not deviate from the chosen option.

- Key decisions and constraints — the boundaries, trade-offs and no-goes.

- What success looks like — how we'll know it's working. At this point, I will also write a scenario for the system specification. This is my litmus test to see if the feature's happy path actually works, and not just if Claude says it works. I also often ask Claude to take screenshots during the tests to check that the front end is rendered correctly and that everything looks cohesive.
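A cheap deterministic check can confirm that a design document actually contains these sections before planning starts. The following is a minimal sketch, assuming the design doc is a Markdown file with one heading per section; the heading names and the `missing_sections` helper are hypothetical and are not taken from superpowers or the plugin.

```ruby
# Hypothetical section headings; adjust to whatever your design docs use.
REQUIRED_SECTIONS = [
  "Problem",
  "Chosen approach",
  "Key decisions and constraints",
  "What success looks like"
].freeze

# Returns the required sections that have no matching Markdown heading.
# Matching is case-insensitive and ignores the heading level.
def missing_sections(markdown, required = REQUIRED_SECTIONS)
  headings = markdown.scan(/^[#]{1,6}[ \t]+(.+?)[ \t]*$/).flatten.map(&:downcase)
  required.reject { |section| headings.include?(section.downcase) }
end

doc = <<~MD
  # Discord notifications
  ## Problem
  Users miss important events.
  ## Chosen approach
  One webhook per workspace.
MD

p missing_sections(doc)
# => ["Key decisions and constraints", "What success looks like"]
```

A check like this only proves the sections exist, not that they are any good; the spec review loop and the human review still carry that weight.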

Implementation plan

Once the design document has been approved, the writing-plans skill turns it into an implementation plan consisting of bite-sized tasks, each of which is a single 2–5 minute action following TDD.

I've modified the behaviour of superpowers here a bit. Originally, it was extremely detailed. It included exact code snippets that should be implemented.

Example instructions for validating the format of a video URL:

    def video_url_format
      return if extract_youtube_id(video_url)

      errors.add(:video_url, "must be a valid YouTube URL (use youtube.com/watch?v=... format, Shorts links are not supported)")
    end

But it doesn't make much sense for our harness. This is not what we're building here. Reviewing a few snippets for model validations is fine, but just imagine trying to review the entire implementation plan for a larger feature. It would basically be a review of code that hadn't even been run once, without linters or running tests. It's really tedious work, and I hated doing it.

So I switched to intent-level steps by default: "Add presence validation for email", "test status transitions". Plans are 3–5 times shorter and easier to review.

Here's an example instruction for video URL format validation:

Add validation for video url format, it should accept only links to youtube videos, shorts are not supported

But we can only do this because of what backs them up. The reason we can trust agents to figure out the how is convention skills. We have a full set of them: controller conventions, model conventions, testing conventions, policy conventions, migration conventions, and more. Each one encodes the project's actual patterns: how we structure controllers, what our test setup looks like, where validations go, how we handle authorization. When an executor agent picks up a task, it loads these skills and reads the existing codebase.

Hooks enforce this. They block the agent from editing files without loading the relevant conventions first.
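To make the idea concrete, here is a minimal sketch of the decision such a hook could make. It assumes the hook receives a JSON payload with `tool_name` and `tool_input.file_path` fields and that the set of already loaded skills is available from somewhere; the skill names, the path patterns and the `blocked_reason` helper are all hypothetical.

```ruby
require "json"

# Hypothetical mapping from path patterns to the convention skill that
# must be loaded before a file under that path may be edited.
REQUIRED_SKILLS = {
  %r{(\A|/)app/models/}      => "model-conventions",
  %r{(\A|/)app/controllers/} => "controller-conventions",
  %r{(\A|/)db/migrate/}      => "migration-conventions"
}.freeze

# Returns nil when the tool call may proceed, or a message explaining
# why it is blocked. Only file-editing tools are checked.
def blocked_reason(payload_json, loaded_skills)
  payload = JSON.parse(payload_json)
  return nil unless %w[Edit Write].include?(payload["tool_name"])

  path = payload.dig("tool_input", "file_path").to_s
  _, skill = REQUIRED_SKILLS.find { |pattern, _| path.match?(pattern) }
  return nil if skill.nil? || loaded_skills.include?(skill)

  "Load the #{skill} skill before editing #{path}"
end

payload = JSON.generate(tool_name: "Edit", tool_input: { file_path: "app/models/user.rb" })
puts blocked_reason(payload, [])
# prints: Load the model-conventions skill before editing app/models/user.rb
```

A real hook script would read the payload from standard input and exit with a blocking status whenever a reason is returned. How loaded skills are tracked is left out on purpose, and the exact payload fields and exit codes should be checked against the Claude Code hooks documentation.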

This is the key shift: the intelligence for how to write code doesn't live in the plan anymore. It lives in the convention skills and the codebase itself. The plan just needs to say what to build and where.
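For the video URL instruction, an executor agent has to supply the details the plan leaves out, such as the `extract_youtube_id` helper that the detailed snippet calls but never shows. Below is a sketch of what it might produce, in plain Ruby so it runs outside Rails. The accepted hosts, the 11-character ID pattern and the helper body are assumptions, not the project's real code.

```ruby
require "uri"

# Hosts accepted by this sketch (an assumption).
YOUTUBE_HOSTS = %w[youtube.com www.youtube.com m.youtube.com].freeze
# Video IDs are assumed to be 11 characters: letters, digits, "_" or "-".
YOUTUBE_ID = /\A[\w-]{11}\z/

# Returns the video ID for youtube.com/watch?v=... links, nil otherwise.
# Shorts links are rejected because their path is not "/watch".
def extract_youtube_id(url)
  uri = URI.parse(url.to_s.strip)
  return nil unless uri.is_a?(URI::HTTP) && YOUTUBE_HOSTS.include?(uri.host.to_s.downcase)
  return nil unless uri.path == "/watch"

  id = URI.decode_www_form(uri.query.to_s).to_h["v"]
  id if id&.match?(YOUTUBE_ID)
rescue URI::InvalidURIError, ArgumentError
  nil
end

p extract_youtube_id("https://www.youtube.com/watch?v=dQw4w9WgXcQ")  # => "dQw4w9WgXcQ"
p extract_youtube_id("https://www.youtube.com/shorts/dQw4w9WgXcQ")   # => nil
```

Where a helper like this lives, and how it is tested, is exactly what the convention skills are there to decide.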

However, not everything should be at the intent level. It's all about risk management. Get a migration wrong and you lose data. The rule is: use intent-level by default and exact code for fragile operations such as migrations, destructive operations, Stripe API calls and non-obvious configuration.
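The rule can be written down as a tiny classifier. This is a deliberately crude sketch: the keyword patterns and the `plan_detail_for` name are hypothetical, and in practice the call is a judgement about risk, not a regex match.

```ruby
# Hypothetical markers of fragile work, loosely following the rule above:
# migrations, destructive operations, Stripe calls and configuration.
FRAGILE_PATTERNS = [
  /\bmigrations?\b/i,
  /\b(drop|delete|destroy|truncate)\b/i,
  /\bstripe\b/i,
  /\bconfig(uration)?\b/i
].freeze

# Returns :exact_code for tasks that look fragile, :intent otherwise.
def plan_detail_for(task_description)
  fragile = FRAGILE_PATTERNS.any? { |pattern| task_description.match?(pattern) }
  fragile ? :exact_code : :intent
end

p plan_detail_for("Add presence validation for email")           # => :intent
p plan_detail_for("Write migration adding webhook_url to users") # => :exact_code
```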

This is harness engineering in practice: the plan constrains what gets built, the convention skills constrain how, and the agent provides the intelligence to connect the two.

Implementation

Let's put our plan into action. Each task in the plan has its own subagent responsible for the coding.

The subagent follows a strict workflow: implement, request a review, run CI, commit and report back.

This is crucial because agents can start drifting. Including a review process for each task helps to identify problems early on. Depending on your codebase, even a small change to the model can cause half of your tests to fail (been there, done that). The sooner we identify issues, the better. There's nothing more frustrating than debugging a new feature with 3,000 lines of code. And you're not even debugging your own code!

I have a four-step review process (read about it in the previous blog post):

- Spec compliance – a separate agent reads the plan and the actual code. It flags differences and checks that nothing is missing or extra.

- Code quality – general rules for writing clean code.

- Rails conventions – does the code adhere to the patterns of your project? Is the business logic in the models? Are the controllers structured the way we want them to be? Agents should already follow these rules, but the more reviews you do, the more confident you can be that they are actually being followed.

- Local CI – depending on your setup, this may flag missing or failing tests. Add any deterministic checks that your application requires, such as RuboCop or Herb Linter. I highly recommend adding Undercover. This greatly reduces the number of untested methods and no_method_errors.
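A local CI entry point can be little more than an ordered list of commands that stops at the first failure. The sketch below assumes RuboCop, RSpec and Undercover are installed through Bundler; the step list and the `run_ci` helper are illustrative, not the contents of any real bin/ci.

```ruby
# Each step is a label plus the command it would run. The commands are
# assumptions about a typical setup; swap in your own checks.
CI_STEPS = [
  ["RuboCop",    %w[bundle exec rubocop]],
  ["Tests",      %w[bundle exec rspec]],
  ["Undercover", %w[bundle exec undercover]]
].freeze

# Runs the steps in order and stops at the first failure.
# `runner` receives the command array and returns a truthy value on
# success; it defaults to Kernel#system so this works as a real script.
def run_ci(steps = CI_STEPS, runner: ->(cmd) { system(*cmd) })
  steps.each do |label, cmd|
    return { ok: false, failed_step: label } unless runner.call(cmd)
  end
  { ok: true, failed_step: nil }
end

# Demonstration with a fake runner that pretends the test suite fails.
p run_ci(runner: ->(cmd) { !cmd.include?("rspec") })
```

Failing fast keeps the feedback to the agent short: it sees one broken step, fixes it, and runs the script again.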

If a reviewer identifies any issues, the implementer resolves them and then requests a review again until the reviewer gives their approval. They then move on to the next task.
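The loop can be sketched in a few lines. Everything here is a stand-in: the reviewers and the fixer are plain lambdas in place of agent calls, and the `max_rounds` cap is an added assumption to keep the loop from running forever.

```ruby
# Runs the reviewers in order. On the first rejection the implementer
# fixes the reported issues and the whole pipeline starts again.
def review_until_approved(change, reviewers:, fix:, max_rounds: 5)
  max_rounds.times do |round|
    rejection = nil
    reviewers.each do |name, review|
      found = review.call(change)
      next if found.empty?

      rejection = [name, found]
      break
    end
    return { approved: true, change: change, rounds: round + 1 } if rejection.nil?

    change = fix.call(change, *rejection)
  end
  { approved: false, change: change, rounds: max_rounds }
end

# Toy model: a change is a hash listing its known problems.
reviewers = {
  spec_compliance: ->(c) { c[:problems] & [:missing_validation] },
  code_quality:    ->(c) { c[:problems] & [:long_method] },
  conventions:     ->(c) { c[:problems] & [:logic_in_controller] },
  local_ci:        ->(c) { c[:problems] & [:failing_test] }
}
fix = ->(c, _reviewer, found) { c.merge(problems: c[:problems] - found) }

result = review_until_approved({ problems: [:long_method, :failing_test] },
                               reviewers: reviewers, fix: fix)
p result
# => {approved: true, change: {problems: []}, rounds: 3} (hash formatting varies by Ruby version)
```

Restarting from the first reviewer after every fix is the conservative choice: a fix for a CI failure can easily break spec compliance again.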

By the time all tasks have been completed, each one will have been reviewed individually, tested continuously and verified against the plan at every step.

Final review

The individual tasks have been completed. But does the entire feature come together successfully?

Once all the tasks are complete, a final reviewer looks at the implementation as a whole. Do the pieces fit together? Is the naming consistent across the files? Does the controller match the model it communicates with? Does the requested system test pass?

Task-level reviews identify issues within each section. This review identifies issues between them.

At this point, I sometimes ask Claude to manually test the feature via Chrome MCP. This involves navigating the app, filling out forms and checking styling and JavaScript errors. The goal is simply to confirm that the feature works before it is handed over to me.

Here and there fixes

Now it's time for manual QA, human code review and corrections. This is the first time I see the code. Depending on how good the specification was, it's either "five minutes and let's ship" or "oh, jeez".

I do review the code. If I find something I don't like, I stop and think about how I can improve the harness to avoid this problem next time. I don't babysit agents. I create better guidelines and constraints for them, and the harness keeps evolving. For example, I started using helpers for the views, but then I switched to ViewComponent because it makes the code easier to test, contains tech debt in one place, and makes refactoring easier.

Occasionally, the feature is useless. It works, but the code is complex and doesn't fit the domain as I expected. There are nasty workarounds here and there. Tech debt.

However, the problem wasn't Claude; it was me. I had the wrong understanding of the feature and navigated it in the wrong direction. Or I underestimated the scope. I treat situations like this as lessons. I learn what I can from the generated code and start again. More often than not, starting again is easier than trying to fix a piece of poor code that can't be fixed.

Try it

That's the harness. That's how I stopped babysitting the AI system and went kitesurfing. Build the system that builds the system, then step away from the keyboard.

With this approach, getting a mid-sized new feature from idea to production takes roughly 4 hours for a medium-to-large Rails application. One hour for planning and back-and-forth with the product team. Two hours for Claude. One hour for QA, code review and deployment. That leaves me with two hours to kitesurf (I also take long lunch breaks).

I've packaged the above workflow into the plugin that I use every day. It's built on top of superpowers by Jesse Vincent, which gave me the foundation to build from. You can install it directly into Claude Code. Make sure you adjust the settings to match your project. You can read about my conventions here.

https://github.com/marostr/superpowers-rails

To get the best results, make sure you have the following:

- Local CI with my recommendations (see previous post) available at bin/ci.

- Rails project-specific convention skills (the plugin has my opinionated conventions).

- Opus 4.6 as your model.

Begin with a brainstorming session, and then allow Claude to guide you through the rest of the process. Keep the feature as simple as possible and narrow down the scope. The code is much easier to review and QA when it's small and contained. You can always extend it in future iterations.

If you want help setting this up for your team, that's what we do at fryga.

On this blog I plan to cover the entire autonomous AI coding journey.

So far, we have covered:

- Post #1: Claude ignores my rules (skills + hooks = permanent ink).

- Post #2: does the output match what the tattoo says? (CI + review = verification)

- Post #3: what do you tattoo? (your app conventions)

- Post #4 (this post): wrapping it all together in a harness

I'd love to hear your thoughts. Reach out to me on LinkedIn or at marcin@fryga.io.